2 Chapter 1: Why Parallel Computing?

Microprocessor performance was increasing rapidly until 2002. Now the rate of increase has dropped. The solution is to use multiple processors.

The challenge is letting the software utilize the multiple cores or processors. There are two approaches

- Rewrite serial programs to be parallel

- Write translation programs that automatically convert serial programs into parallel programs. (Very Difficult; No Success yet)

2.1 Global Sum Algorithm Example

Consider the example of an algorithm that sums up a list of \(n\) variables while having \(p\) cores (where \(p << n\)). Each core can sum up \(\frac{n}{p}\) variables and send the result to the master core. The master core then sums up all the results.

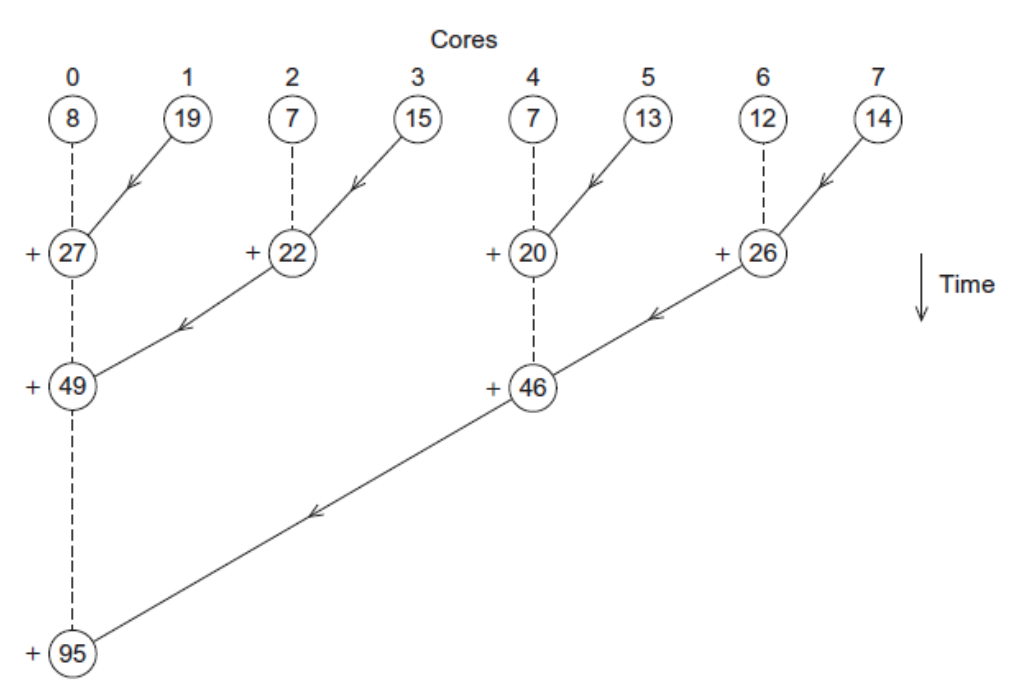

There is an even better (faster way). Instead of letting the master core handle all the work of summing up the results, divide it on the cores. Let core 0 sum its result with 1 and core 2 with 3 and so on. And keep moving up. Figure 2.1 demonstrates this idea visually.

2.2 Parallelism Types

There are two types of parallelism.

Task Parallelism is partition the tasks carried out for solving a problem among the cores.

Data Parallelism is dividing the data used in solving the problem among the cores and each core performs simillar operations on its part of the data.

For example, consider the task of marking 300 exam papers of 15 questions by 3 TAs. Task parallelism in each TA marking 5 questions for all the papers. Data parallelism is each TA marking the 15 questions for only 100 papers.

2.3 Coordination

Cores usually need to coordinate their work. Three important definitions are:

Communication: One or more cores send data to another core

Load Balancing: Share the work evenly among the cores

Synchrnization: Make sure cores do not get too far ahead of the rest. Notice that each core runs on its own speed.

2.4 Parallel Systems Types

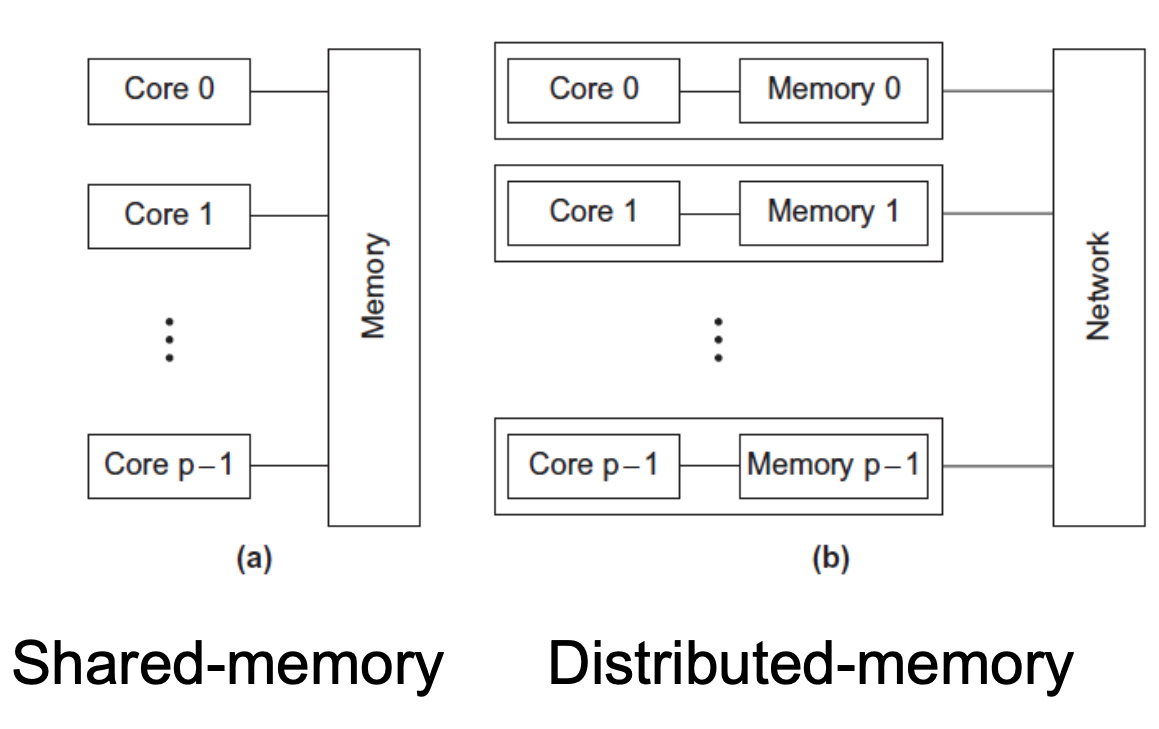

There are 2 types of parallel systems

2.4.2 Distributed Memory

Here, each core has its own private memory. Communication happens by explicitly sending messages across a network.

2.5 Important Terminology

Concurrent Computing: A program is one in which multiple tasks can be in progress at any instant. This usually includes a single processor running multiple processes concurrently using time-sharing.

Parallel Computing: A program is one in which multiple tasks cooperate closely to solve a problem. Typically, this includes multicore processors.

Distributed Computing: A program may need to cooperate with other programs to solve a problem. This usually includes a cluster of multiple separate computers.